echo “y” | gh auth logout –hostname github.com

Menu

Home

Issues

8? Pull requests

Projects

Discussions

Codespaces

& Copilot

Explore

® Marketplace

Repositories

Ray2407|250527b

Ray 2407/Ray250527

Ray2407/250525

Actions · Crh2123/ChatGPT · GitHub

Marketing zip file

Affiliate links

LemonSqueezy – Sell digital products or SaaS

Gumroad – Sell templates, bots, or guides

PartnerStack – Join SaaS affiliate programs

Impact – Promote big brand products

Rakuten Advertising – Retail affiliate network

Ko-fi – Accept donations or tips

Buy Me a Coffee – Monetize support

Notion – Build and sell templates

Tally – Free form builder w/ payments

Popsy – Create simple landing pages

Make – No-code automation builder

Bubble – Build apps without coding

Carrd – One-page sites for products

Dev.to – Post blogs with affiliate links

Hashnode – Blog from GitHub repos

Replit – Host bots or code tools

CodeSandbox – Embed live code demos

Netlify – Free web hosting

UserTesting – Get paid for testing sites

Prolific – Paid academic-style surveys

Remotasks – AI training tasks

Fiverr – Sell AI or coding gigs

Toptal – Premium freelance jobs

PeoplePerHour – Freelance marketplace

Contra – No-fee freelance platform

1 June, 2025 06:24

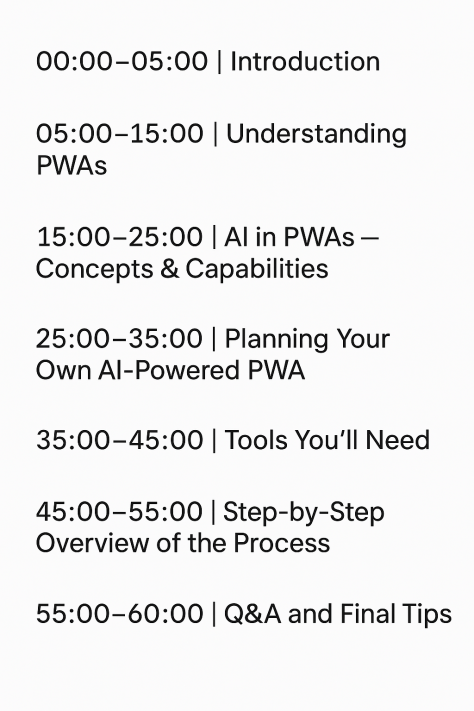

One hour class breakdown

Token Exposure Warning

Python crawler

import requests

from bs4 import BeautifulSoup

import json

import pandas as pd

class WebCrawler:

def __init__(self, base_url):

self.base_url = base_url

def fetch_page(self, url):

try:

response = requests.get(url)

response.raise_for_status() # Raise HTTPError for bad responses return response.text

except requests.exceptions.RequestException as e:

print(f”Error fetching {url}: {e}”)

return None

def parse_html(self, html_content, tag, attributes={}):

soup = BeautifulSoup(html_content, ‘html.parser’)

return soup.find_all(tag, attrs=attributes)

def scrape(self, page_path=’/’):

url = f”{self.base_url}{page_path}”

html_content = self.fetch_page(url)

if not html_content:

return []

# Example: Extract all links

links = self.parse_html(html_content, ‘a’, {‘href’: True}) return [link[‘href’] for link in links if ‘href’ in link.attrs]

def fetch_api(self, endpoint):

try:

response = requests.get(f”{self.base_url}{endpoint}”) response.raise_for_status()

return response.json()

except requests.exceptions.RequestException as e:

print(f”Error fetching API {endpoint}: {e}”)

return {}

def store_data_csv(self, data, filename):

df = pd.DataFrame(data)

df.to_csv(filename, index=False)

print(f”Data saved to {filename}”)

def store_data_json(self, data, filename):

with open(filename, ‘w’) as f:

json.dump(data, f, indent=4)

print(f”Data saved to {filename}”)

if __name__ == “__main__”:

# Example usage

crawler = WebCrawler(“https://example.com“)

# Scraping links from a webpage

links = crawler.scrape(“/”)

print(“Scraped Links:”, links)

# Fetching data from an API endpoint

api_data = crawler.fetch_api(“/api/data”)

print(“API Data:”, api_data)

# Storing scraped data into CSV

crawler.store_data_csv([{“link”: link} for link in links], “links.csv”)

# Storing API data into JSON

crawler.store_data_json(api_data, “api_data.json”)